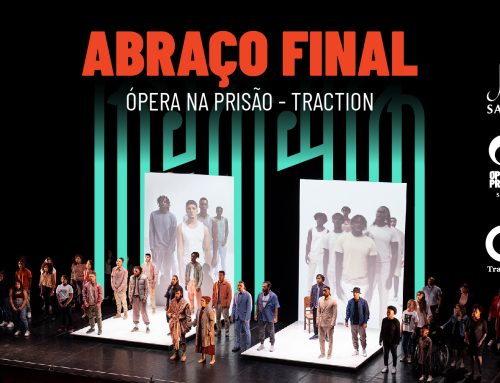

TRACTION pilot activities

Testing the Mediavault and Performance Engine

Authors: Alina Striner, Thomas Röggla, Stefano Masneri, Mikel Zorrilla, Irene Calvis, Paulo Lameiro, Pedro Almeida and Pablo Cesar

A main goal of the TRACTION project is to use technology to support the co-creation and consumption of opera content by diverse communities across the European Union. After a lengthy design and development process, we evaluated the Mediavault and the Performance Engine through a series of usability tests paired with in-depth interviews.

Usability Study: A research technique to evaluate the design of a system, product, or experience by testing it directly on users. The goal of usability testing is to measure how well a design meets its intended purpose, and how intuitive it is for users with no prior experience to learn to use the tool.

In-Depth Interview: A technique to discover respondents’ perceptions or to probe into a subject to explore nuances and details. In-depth interviews range from informal conversations to more formal interviews, and may be unstructured, semi-structured or structured.

In this article we present the pilot activities for the two tools. First, we describe the study method and results for the Mediavault, then complement this with the method and results for the Performance Engine. These pilots appraise the usefulness of the tools for co-creation and performance, and provide valuable feedback for the development of future iterations of the tools. Findings gathered during the pilot activities will inform the requirements used in the next stage of the toolset design process.

Mediavault

We conducted a Mediavault pilot activity with LICEU trial users in Barcelona, Spain, including Sinea creatives and students from Escola Massana. During the study, participants tested the usability of the interface and responded to interview questions about the value of the Mediavault as a communication and co-creation tool.

At the beginning of the study, participants were introduced to an interaction scenario that asked them to imagine they were working on a poster for the opera with a partner. As they completed tasks, participants were instructed to “think aloud” by narrating their actions and thoughts, so that we could understand their behaviour, goals, and motivations.

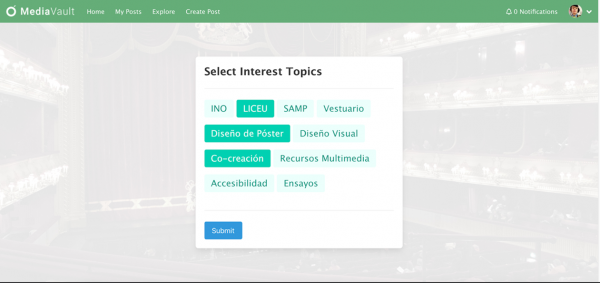

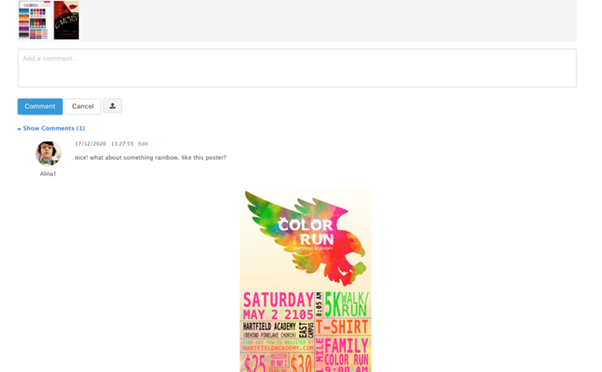

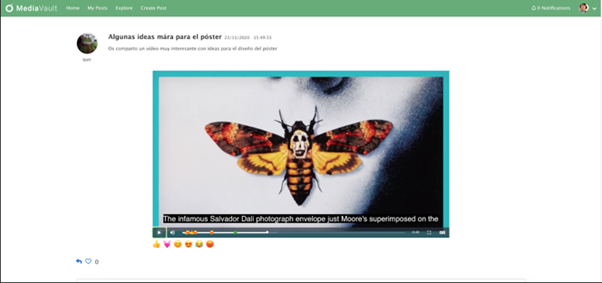

Participants were guided through a set of tasks. First, they created an account, selected their topic interests (Figure 1), created a new topic, and responded to comments in that topic (Figure 2). Then, they interacted with a visual timeline and commented on a video using emojis and text (Figure 3). The facilitator was instructed to help participants as little as possible, but was given a list of probing questions to use in case participants got stuck.

At the end of the study, participants explored the Mediavault system and answered open-ended interview questions about their experience. The goal of these questions was to assess the value of the Mediavault as a tool for co-creation, and to learn how it could be improved in future iterations. In addition, participants completed the System Usability Scale (SUS) questionnaire, a standard metric used in usability testing.

Figure 1: Mediavault screenshot of selecting interest topics.

Figure 2: Mediavault screenshot of responding to a post with a comment and an image.

Figure 3: Mediavault screenshot of a video with emoji reactions.

Results

Our findings indicate that the Mediavault tool was both easy to use and valuable to TRACTION participants. On the SUS questionnaire, Sinea participants gave the tool a score of 68.75 on the 100-point transposed scale. Similarly, Massana participants gave the tool a score of 72. Both scores are above average relative to scores collected from other design evaluations.
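For readers unfamiliar with the "100-point transposed scale", the sketch below shows the standard SUS scoring procedure: each of the ten items is answered on a 1 to 5 scale, odd-numbered items contribute (response - 1), even-numbered items contribute (5 - response), and the sum is multiplied by 2.5. This is a minimal illustration that assumes the pilots used this standard procedure. The function name `sus_score` and the sample responses are hypothetical and are not data from the study.

```python
from statistics import mean


def sus_score(responses):
    """Return the 0-100 SUS score for one participant.

    `responses` holds the ten questionnaire answers in order,
    each an integer from 1 (strongly disagree) to 5 (strongly agree).
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")

    total = 0
    for index, response in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded.
            total += response - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded.
            total += 5 - response
    # Transpose the 0-40 raw sum onto a 0-100 scale.
    return total * 2.5


# Hypothetical answers from two participants (not study data).
example_group = [
    [4, 2, 4, 2, 4, 2, 4, 2, 4, 2],
    [5, 1, 5, 1, 5, 1, 5, 1, 5, 1],
]

# A group score is the mean of the individual scores.
group_mean = mean(sus_score(r) for r in example_group)
print(group_mean)
```

Because every item contributes between 0 and 4 points, individual scores move in steps of 2.5, and a group score is the mean of its members' individual scores.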

During the study, participants completed all the tasks with minimal prompting. When asked about its value for co-creation activities, all participants responded that the tool was “well thought out” (L16), as well as “useful and clear”, particularly because it was “focused on the co-creation process.” Further, when comparing it to existing tools, participants felt the Mediavault was simpler.

Study participants gave thorough feedback about the visual design of the interface; the menu, search, and tagging capabilities; and the user profiles. Suggestions included changing the colours and visual layout, making the menu and tagging features clearer, and adding more functionality to the search feature and user profiles. Participants wanted larger images, less text, predefined tags in the posts, and the ability to comment on posts with emoji reactions. In the timeline view, participants wanted to search by words, dates, users, and types of content. Participants also wanted to see the profiles of other users.

Performance Engine

We conducted a Performance Engine pilot activity with SAMP trial users in Leiria, Portugal. As in the Mediavault test, participants first tested the usability of the interface, then completed the SUS questionnaire and answered open-ended interview questions about the value of the Performance Engine as a live media tool. Participants were likewise instructed to “think aloud” by narrating their actions and thoughts, so that we could understand their behaviour, goals, and motivations.

At the beginning of the study, participants were introduced to an interaction scenario in which they were responsible for operating the Performance Engine on the day of the opera. They were told that it was important to make sure that the cameras and displays worked, and that remote participants could join the show. Then, during the study, participants checked the cameras and started the displays, checked the connection with remote users, navigated from one scene to the next, browsed to the end of the performance, changed the layout of a display, showed different components, and loaded an existing template.

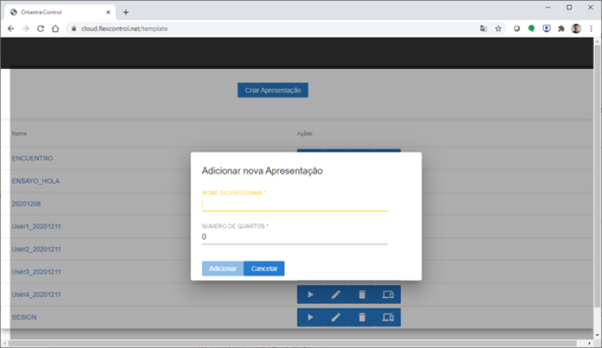

Figure 4: Performance Engine interface for creating a new screen.

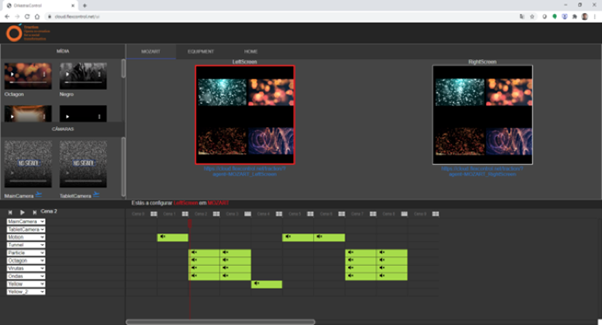

Figure 5: Performance Engine main interface.

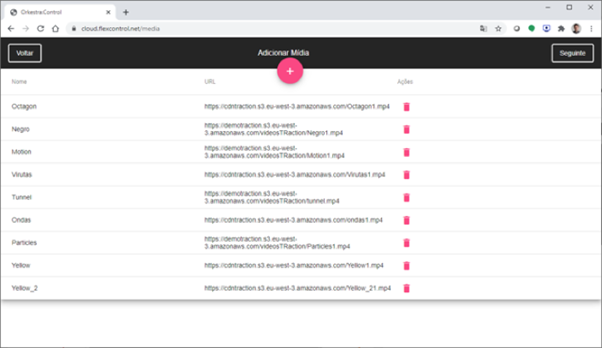

Figure 6: Performance Engine interface for uploading content to be used in the show.

Results

Overall, SAMP participants found the Performance Engine intuitive and easy to use, and gave the tool a SUS score of 93.75 on the 100-point transposed scale. This is well above average relative to scores collected from other design evaluations. Due to time constraints, we conducted only a preliminary qualitative analysis of the Performance Engine pilot activity, and plan to conduct a more detailed analysis in the future.

Participants responded to different aspects of the user interface. We found that users made some assumptions that did not match the behaviour of the interface. For instance, users had trouble understanding the media component functionality and how components were replaced across scenes. Similarly, they were unsure how time was represented in the timeline, and wanted to pause the videos, which was not possible in the current state of the interface. Users suggested improvements that included changing the layout icons and having the vertical bar in the timeline show how much time has passed since the beginning of the scene. As with the Mediavault, the usability issues we collected will inform requirements for future iterations of the Performance Engine tool.